Insights, Breakthroughs, and Stories from Sony AI

Explore how our teams are pushing the boundaries of artificial intelligence.

Explore Our Global Impact

Every day, our globally recognized researchers explore the most complex questions in AI. Our mission—to unleash human imagination and creativity—shapes a distinct perspective, one that opens new creative pathways while honoring past innovation and expanding the boundaries of what is possible.

Creating an Ecosystem of Fairness and Protection with AI

Today’s narratives would have you believe that AI’s only role is in creating content that takes advantage of and replaces human creativity. Current opt-out systems place the burden on creators to proactively restrict the use of their work—a near-impossible task given how widely content is distributed online. Additionally, those opt-out signals are frequently ignored or circumvented by AI developers.

Our researchers are working to develop AI technologies and new breakthroughs in AI science that use the unique power of artificial intelligence to empower artists and rights holders with tools that protect past and future creative pursuits.

Sony AI’s researchers are crafting blueprints for technologies that help artists and rights holders understand when and how their work appears in generated music, and that enable tools supporting attribution and protection at scale. Recent work opens a discussion about "nuanced opt-in," which flips the default of "opt-out" systems so that no work is used unless its creator explicitly permits it. We're also exploring attribution by design, rethinking how artists are compensated in a world of AI-generated content.

Research Highlights: AI Ethics

At Sony AI, our AI Ethics team has spent years orienting our research to challenge the status quo of dataset construction.

Our work has shown how existing human-centric computer vision datasets often rely on non-consensual web scraping, lack critical demographic metadata, and perpetuate representational gaps. This leads to models that can be both unfair and unreliable (Andrews et al., Ethical Considerations for Responsible Data Curation, NeurIPS 2023).

We’ve also highlighted a deeper paradox: Protecting privacy by limiting data collection can sometimes leave marginalized groups “unseen,” yet this very absence increases the risk of being “mis-seen” by AI systems—misclassified, misrecognized, or misrepresented (Xiang, Being Seen Versus Mis-Seen, Harvard JOLT 2022). The harms of invisibility and mis-visibility are inseparable, and solving them requires a shift in how datasets are built.

We argue that fairness cannot be treated as an afterthought. From our ethical audits to our analysis of how demographic data shapes bias detection, our research has made clear that datasets must be designed with purpose, consent, and diversity from the outset (What if Fairness Started at the Dataset Level? 2025).

Together, these works revealed not only the importance of ethical data collection but also the difficulty of reconciling best practices, conflicting priorities, and technical specifications of fairness.

This is evidenced in the Fair Human-Centric Image Benchmark (FHIBE). FHIBE comprises 10,318 consensually sourced images of 1,981 unique subjects, each with extensive and precise annotations. These annotations capture demographic and physical attributes, environmental factors, and camera settings, which together enable nuanced assessments of fairness and bias across a wide range of demographic attributes and their intersections. The images were collected from subjects in over 81 countries/regions, making FHIBE one of the most globally diverse and most comprehensively annotated datasets in existence.
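To make the idea of assessing fairness across attributes and their intersections more concrete, here is a minimal Python sketch. It is not FHIBE's evaluation code: the field names (`age_group`, `skin_tone`, `correct`), the toy records, and the helper functions are all hypothetical, chosen only to illustrate how per-group accuracy and the gap between groups might be computed from annotated results.

```python
from collections import defaultdict

# Hypothetical evaluation records: annotated attributes plus whether a model's
# prediction was correct. These field names are illustrative, not FHIBE's schema.
records = [
    {"age_group": "18-29", "skin_tone": "lighter", "correct": True},
    {"age_group": "18-29", "skin_tone": "lighter", "correct": True},
    {"age_group": "18-29", "skin_tone": "darker", "correct": True},
    {"age_group": "18-29", "skin_tone": "darker", "correct": False},
    {"age_group": "50+", "skin_tone": "lighter", "correct": True},
    {"age_group": "50+", "skin_tone": "lighter", "correct": True},
    {"age_group": "50+", "skin_tone": "darker", "correct": False},
    {"age_group": "50+", "skin_tone": "darker", "correct": False},
]


def accuracy_by_group(records, attributes):
    """Return accuracy for every combination of the given attributes."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        # A tuple of attribute values identifies one (intersectional) group.
        key = tuple(record[name] for name in attributes)
        totals[key] += 1
        hits[key] += int(record["correct"])
    return {key: hits[key] / totals[key] for key in totals}


def max_disparity(accuracies):
    """Gap between the best-served and worst-served group."""
    return max(accuracies.values()) - min(accuracies.values())


by_age = accuracy_by_group(records, ["age_group"])
by_intersection = accuracy_by_group(records, ["age_group", "skin_tone"])
age_gap = max_disparity(by_age)
intersection_gap = max_disparity(by_intersection)
```

In this toy data the gap measured on age alone is 0.25, while the gap across age and skin tone together is 1.0. That is the kind of hidden disparity that intersectional annotations are meant to surface.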

FHIBE: Bias and Fairness

Sony AI’s FHIBE provides the first globally diverse, consensual dataset to build fairer, more transparent computer vision technologies.

Protective AI, Music Attribution, IP-Level Tracking

New research papers accepted to NeurIPS, ICML, and Interspeech 2025 focus on musical integrity in the age of machine learning, exploring attribution, recognition, and protection.

Research Highlights: AI for Creators

This research is part of a growing body of work exploring how AI can unlearn what doesn’t belong to it, how connections between musical segments can be identified, and how effective current audio authentication methods are in verifying a track’s integrity after typical alterations, such as compression or file conversion.

Memorization

To protect licensed data, Sony AI researches ways to prevent verbatim memorization in LLMs. The aim is for AI to remain a creative partner rather than a duplicator.

Learning Play, Not Just Prediction

From sentiment analysis to interactive robotics, AI tackles a range of challenges. While some tasks involve parsing patterns or generating outputs from static data, others require systems to make decisions, adapt in real time, and learn from experience. This is where reinforcement learning thrives: at the core of learning how to act, not just predict.
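The difference between predicting and acting can be illustrated with a deliberately tiny example. The sketch below is an epsilon-greedy multi-armed bandit, a textbook simplification of reinforcement learning and not the method behind any Sony AI system. The reward values, step count, and function name are assumptions made purely for illustration: the agent is never told which action is best and has to find out by trying actions and learning from the rewards it receives.

```python
import random


def run_bandit(rewards, steps=500, epsilon=0.1, seed=0):
    """Learn which action pays best purely from trial and error.

    `rewards` holds a fixed (hypothetical) payoff per action; the agent
    only ever sees the payoff of the action it actually takes.
    """
    rng = random.Random(seed)
    estimates = [0.0] * len(rewards)  # running average reward per action
    counts = [0] * len(rewards)       # how often each action was taken

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(len(rewards))
        else:
            # Exploit: take the action that currently looks best.
            action = max(range(len(rewards)), key=lambda a: estimates[a])

        reward = rewards[action]
        counts[action] += 1
        # Incremental update of the running average for the chosen action.
        estimates[action] += (reward - estimates[action]) / counts[action]

    return estimates, counts


estimates, counts = run_bandit([0.2, 0.8, 0.5])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
```

Nothing here is predicted from a static dataset. The estimates exist only because the agent acted, observed the outcome, and adjusted. Full reinforcement learning adds states, delayed rewards, and far richer policies on top of this same loop.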

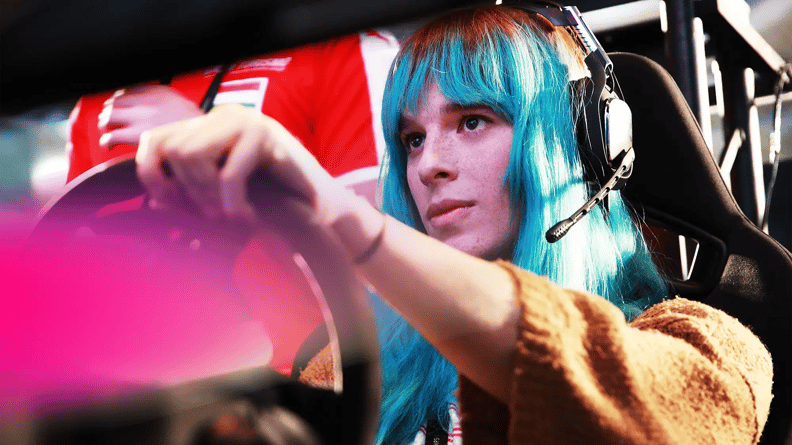

To research and develop GT Sophy, we collaborated directly with Kazunori Yamauchi, CEO of video game studio Polyphony Digital, known for creating the popular racing simulation game Gran Turismo.

Yamauchi-san and his team were involved at every stage of the project. They helped our team define the challenge we wanted to address, research the utility of our novel deep reinforcement learning approach within Gran Turismo, assess how human players responded to and performed against GT Sophy, and release GT Sophy as a feature within Gran Turismo in late 2023.

What started out as a grand challenge to create an AI agent that could beat the world’s best Gran Turismo drivers quickly evolved into a quest for our team to help game developers deliver new and exciting gaming experiences to players around the world through AI.

Research Highlights: Gaming & Robotics

These breakthroughs come from our core research in gaming and robotics. Our teams use deep reinforcement learning and sophisticated sensing to move beyond virtual simulation and into the physical world, analyzing agents that compete and collaborate at advanced levels.

GT Sophy: Interactive RL

Sony AI's GT Sophy evolves reinforcement learning from pure competition to sportsmanship. Integrated into Gran Turismo 7, this agent delivers realistic, collaborative, and human-centric racing experiences.

Beyond Simulation: Real-World RL

Our research shifts AI from virtual benchmarks to the physical world. By combining robotics and sensing, Sony AI demonstrates that agents can achieve expert-level performance in unpredictable, adversarial environments.

Collaborative Human-AI Play

Focusing on "Learning Play," Sony AI develops agents that augment the human experience. These systems prioritize player engagement and refined interaction over simple algorithmic dominance.

Amplifying Creativity

By expanding what AI can see, hear, and translate, we are researching tools that give creators new pathways to amplify their creativity and efficiency. Our work comes into play in audio, sound creation, and seamless multilingual communication.

In many ways, AI—especially generative AI—remains in its infancy. Researchers, AI developers, and creators are working to understand how this technology will influence the creative process.

Because many questions remain around real-time efficiency and controllability, AI researchers and developers need to dedicate significant time and resources to answering them.

From our perspective at Sony AI, we are working to understand how AI influences the work of artists—not only from a technical or process perspective, where it can be a tool they choose to utilize, but also in how it helps them push boundaries to explore new mediums or genres, as well as how it affects their rights and the ways we can protect them.

Research Highlights: Imaging, Sensing, and Music

Much of our research in this area focuses on imaging, sensing, and music. Our teams refine how AI interprets the world and generates high-quality content, providing creators with tools that act as extensions of their craft.

MMAudio: Video-to-Audio Synthesis

Sony AI’s MMAudio generates synchronized sound from video. Using deep learning, it aligns audio with visual timing, providing creators with a contextually accurate sound-design tool.

Similar Sound Search: Creative Workflow

In collaboration with Audiokinetic, Sony AI launched Similar Sound Search. This tool allows designers to find assets by example or text, streamlining the discovery process.

Controllability in AI for Creators

Sony AI’s Flagship team enhances real-time controllability for professionals. These tools ensure AI acts as an intuitive partner, providing granular control over music and 3D creation.

Sound Engineering & DisMix

Addressing pitch and timbre, Sony AI’s DisMix and VRVQ research advances audio mixing. These innovations offer professionals precise control, pushing the boundaries of digital artistry.

Our Biggest Breakthroughs in AI Research

Discover our creation of FHIBE, a publicly available human image dataset implementing best practices for consent, privacy, compensation, safety, diversity, and utility.

OUR WORK

Introducing FHIBE: A Consent-Driven Benchmark for AI Fairness Evaluation

Sony AI’s Fair Human-Centric Image Benchmark (FHIBE) is the first publicly available globally diverse, consensually collected fairness evaluation dataset for a wide variety of human-centric computer vision tasks.

Beyond Skin Tone: A Multidimensional Measure of Apparent Skin Color

As part of this project, our researchers strive to measure apparent skin color in computer vision in a way that goes beyond a unidimensional skin tone scale.

Unlocking the Future of Video-to-Audio Synthesis: Inside the MMAudio Model

MMAudio is a video-to-audio synthesis model designed to convert visual content into immersive, contextually accurate audio. It produces high-quality sound that aligns with the visual components, actions, and settings of the source video while preserving temporal coherence. The model uses a deep learning framework tailored for video-to-audio synthesis: neural networks with temporal processing analyze the visual data to create audio that matches the content.

Latest Updates from Sony AI

Stay current with our latest news, media coverage, and technical insights. Explore the research and people driving our mission forward.

Sony AI’s Contributions at AAAI 2026