Advancing AI: Highlights from March

Sony AI

April 1, 2026

This month, Sony AI's work spans the foundations of generative models and the frontiers of audio and signal processing research. More than 10 papers have been accepted to ICASSP 2026 in Barcelona, covering music structure analysis, audio-visual generation, sound separation, Foley synthesis, and speech processing. Sony AI researcher Chieh-Hsin "Jesse" Lai, alongside Yang Song, Dongjun Kim, and Stefano Ermon, has written “The Principles of Diffusion Models,” a new book that traces the shared mathematical structure underlying DDPMs, score-based models, and flow-based methods. And in the news, Alice Xiang has been recognised in AI Magazine's “Top 100 Women in AI for 2026” and joined the “Me, Myself, and AI” podcast to discuss FHIBE, Sony AI's consent-driven benchmark for evaluating bias in computer vision.

Here is a look at the work that defined the month.

Featured Blog

On Writing The Principles of Diffusion Models, A Q&A With Sony AI Researcher, Jesse Lai

Diffusion models have become one of the most widely used approaches for high-quality generation across audio, images, and beyond. But as the field has grown rapidly, so has its complexity—different communities have arrived at similar ideas through different routes, producing a landscape of overlapping terminology, notation, and frameworks that can be difficult to navigate.

The Principles of Diffusion Models is an attempt to bring clarity to that landscape. Written by Sony AI researcher Chieh-Hsin "Jesse" Lai alongside Yang Song, Dongjun Kim, and Stefano Ermon, the book traces the shared mathematical foundations underlying seemingly disparate approaches, from DDPMs and score-based models to flow-based methods, and shows how they converge on the same core principles.

In this Q&A, Jesse walks through how the book came together, what he wants readers to be able to do after finishing it, and why he believes the underlying ideas in this field tend to outlast the techniques built on top of them.

Read the full interview:

On Writing The Principles of Diffusion Models, A Q&A With Sony AI Researcher, Jesse Lai

Sony AI At Global Events

ICASSP 2026 Preview

The IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP) takes place May 4–8, 2026, in Barcelona, Spain, and Sony AI will arrive with a strong slate of accepted papers.

This year's accepted work spans a wide range of research directions, including:

Do Foundational Audio Encoders Understand Music Structure?

Keisuke Toyama, Zhi Zhong, Akira Takahashi, Shusuke Takahashi, Yuki Mitsufuji

Summary: An investigation into how pretrained foundational audio encoders perform on music structure analysis, examining the impact of learning methods, training data, and model context length. The study finds that self-supervised learning with masked language modeling on music data is particularly effective for this task.

SAVGBench: Benchmarking Spatially Aligned Audio-Video Generation

Kazuki Shimada, Christian Simon, Takashi Shibuya, Shusuke Takahashi, Yuki Mitsufuji

Summary: Addressing the gap in multimodal generative models that overlook spatial alignment between audio and visuals, this work establishes a new research direction and benchmark, including a novel spatial audio-visual alignment metric.

Towards Blind Data Cleaning: A Case Study in Music Source Separation

Azalea Gui, Woosung Choi, Junghyun Koo, Kazuki Shimada, Takashi Shibuya, Joan Serrà, Wei-Hsiang Liao, Yuki Mitsufuji

Summary: Training data quality is critical for music source separation models, but datasets are often corrupted by hard-to-detect artifacts. This paper proposes and evaluates two noise-agnostic cleaning methods that don't require knowing the type of contamination in advance.

MMAudioSep: Taming Video-to-Audio Generative Models Towards Video/Text-Queried Sound Separation

Akira Takahashi, Shusuke Takahashi, Yuki Mitsufuji

Summary: Introducing a generative model for sound separation built on a pretrained video-to-audio model, demonstrating that foundational audio generation models can be efficiently adapted for downstream tasks.

FlashFoley: Fast Interactive Sketch2Audio Generation

Zachary Novack, Koichi Saito, Zhi Zhong, Takashi Shibuya, Shuyang Cui, Julian McAuley, Taylor Berg-Kirkpatrick, Christian Simon, Shusuke Takahashi, Yuki Mitsufuji

Summary: The first open-source, accelerated sketch-to-audio model, enabling real-time interactive audio generation with fine-grained control and no loss of speed.

Automatic Music Sample Identification with Multi-Track Contrastive Learning

Alain Riou, Joan Serrà, Yuki Mitsufuji

Summary: A self-supervised approach to detecting sampled content and retrieving its original source, significantly outperforming previous state-of-the-art baselines across diverse genres.

Automatic Music Mixing Using a Generative Model of Effect Embeddings

Eloi Moliner, Marco A. Martínez-Ramírez, Junghyun Koo, Wei-Hsiang Liao, Kin Wai Cheuk, Joan Serrà, Vesa Välimäki, Yuki Mitsufuji

Summary: Introducing MEGAMI (Multitrack Embedding Generative Auto MIxing), a generative framework that models the conditional distribution of professional mixes, moving beyond deterministic regression to handle the inherent subjectivity of mixing.

Break-the-Beat! Controllable MIDI-to-Drum Audio Synthesis

Shuyang Cui, Zhi Zhong, Qiyu Wu, Zachary Novack, Woosung Choi, Keisuke Toyama, Kin Wai Cheuk, Junghyun Koo, Yukara Ikemiya, Christian Simon, Chihiro Nagashima, Shusuke Takahashi

Summary: A model for rendering drum MIDI with the timbre of a reference audio, offering producers a new, controllable tool for creative production.

FoleyBench: A Benchmark For Video-to-Audio Models

Satvik Dixit, Koichi Saito, Zhi Zhong, Yuki Mitsufuji, Chris Donahue

Summary: The first large-scale benchmark explicitly designed for Foley-style video-to-audio evaluation, containing 5,000 video-audio-text triplets with strong coverage of sound categories specific to Foley.

Leveraging Whisper Embeddings for Audio-Based Lyrics Matching

Eleonora Mancini, Joan Serrà, Paolo Torroni, Yuki Mitsufuji

Summary: Introducing WEALY, a fully reproducible pipeline leveraging Whisper decoder embeddings for lyrics matching, achieving performance comparable to state-of-the-art methods while establishing transparent baselines.

Windowed Summary Mixing: An Efficient Fine-Tuning of Self-Supervised Learning Models for Low-Resource Speech Recognition

Aditya Menon, Kumud Tripathi, Raj Gohil, Pankaj Wasnik

Summary: Enhancing the Summary Mixing approach with local neighborhood summaries to better capture temporal dependencies while reducing peak VRAM usage by 40%.

Sony AI In the News

Alice Xiang and FHIBE Featured on MIT Sloan Management Review’s Me, Myself, and AI Podcast

In a new episode, Alice sat down with Sam Ransbotham, host of the "Me, Myself, and AI" podcast, to discuss the challenge that a lack of ethically sourced datasets presents for evaluating bias in AI systems.

The conversation explores Sony AI’s Fair Human-Centric Image Benchmark (FHIBE), the world’s first publicly available, consent-driven, globally diverse dataset for evaluating bias across a wide range of human-centric computer vision tasks. The discussion digs into why FHIBE was created and how it can enable practitioners to assess fairness using diverse, consent-driven data.

Listen to the podcast here: https://sloanreview.mit.edu/audio/an-industry-benchmark-for-data-fairness-sonys-alice-xiang/

To learn more about FHIBE and access the benchmark, please visit: https://fairnessbenchmark.ai.sony/

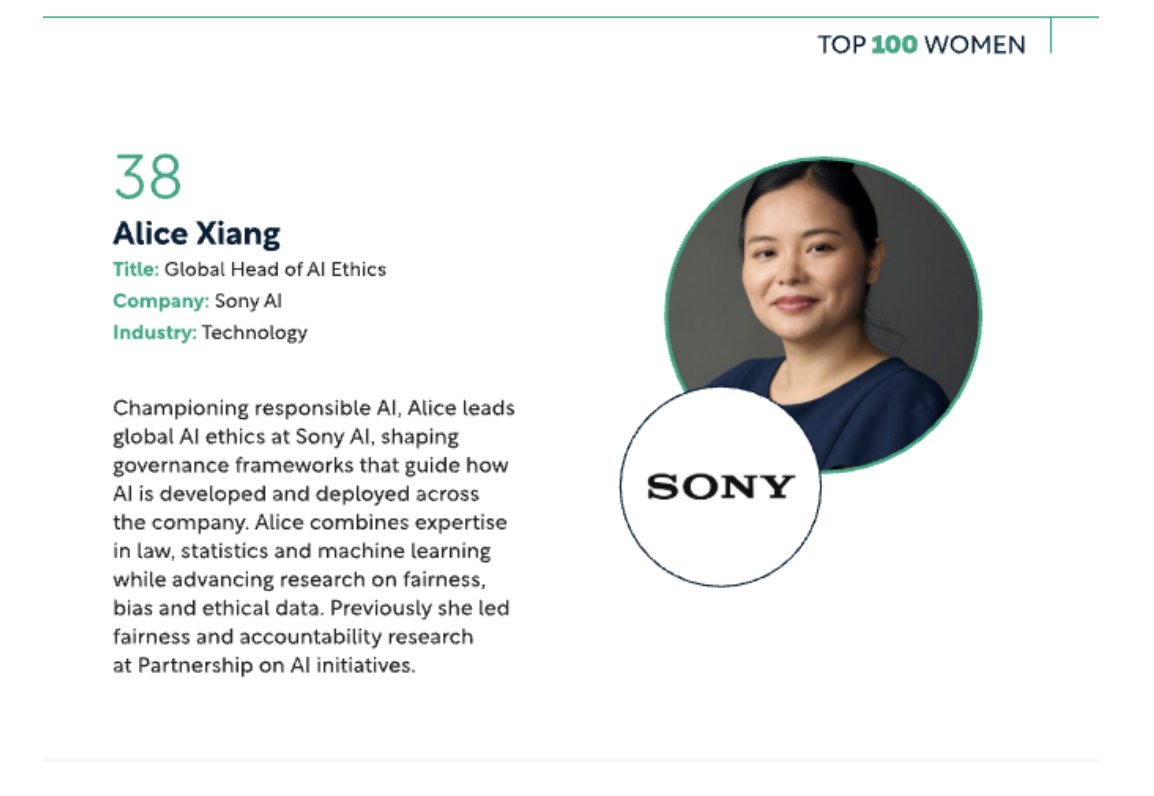

Alice Xiang has been recognised in AI Magazine's “Top 100 Women in AI for 2026”

As Sony Group's Global Head of AI Governance and Lead Research Scientist at Sony AI, Alice Xiang’s work sits at the intersection of ethics, data, and accountability.

FHIBE is a case in point. The Fair Human-Centric Image Benchmark is the first publicly available, consent-driven, globally diverse dataset for evaluating bias in human-centric computer vision. It was published in Nature. It's free to use. And it's already being adopted across industry. Bias in AI doesn't fix itself. Xiang is building the tools to find it.

Congratulations to Alice Xiang and all the leaders recognised this year. Discover the full list: AI Magazine Top 100
