Launching our AI Ethics Research Flagship

AI Ethics

May 12, 2021

I recently joined Sony AI from the Partnership on AI, where I served on the Leadership Team and led a team of researchers focused on fairness, transparency, and accountability in AI. In that role, I had a unique vantage point on how companies, academics, and civil society organizations are tackling AI ethics challenges. I am excited to bring that experience to my new role at Sony and to announce that Sony AI will be launching a fourth flagship research area in AI ethics.

Leading the Way in AI Ethics

As a global technology leader, Sony has a unique opportunity to become a leader in AI ethics. In a field that is dominated by U.S.-based tech companies and European regulatory standards, Sony can offer a distinctively diverse and global perspective with its businesses ranging from interactive entertainment, music and film to electronics, sensors, and AI research. The impacts of AI are global, and ethics itself is intrinsically multifaceted and multicultural. In order to develop more ethical AI for an increasingly globalized market, having diverse perspectives around the table will be key.

Sony’s mission at the intersection of entertainment and technology is uniquely aligned with AI ethics. Our goal is to enhance and expand human creativity and curiosity rather than to replace them. Ethics, manifested through harnessing diversity and earning trust through responsible business practices, has long been a key part of Sony’s values. Sony has consistently been named one of the “World’s Most Ethical Companies.” As the company has grown its businesses through the adoption of AI, developing AI ethics practices has also become key. In 2018, Sony established the Sony Group AI Ethics Guidelines, and at the end of 2020, Sony announced that it would start screening all of its AI products for ethical risks.

Operationalizing AI Ethics

In order to operationalize these AI ethics guidelines, however, research into techniques and best practices for fair, transparent, and accountable AI will be critical. AI ethics is still a relatively nascent field. In fact, much of the literature on algorithmic fairness (the study of how algorithms can reflect and entrench societal biases) can be traced to a 2016 ProPublica article, which found that a recidivism risk assessment tool used in the U.S. criminal justice system was roughly twice as likely to incorrectly label African American defendants as high risk of recidivism compared with white defendants. This finding spurred significant debate, as researchers proved an impossibility theorem showing that some common fairness metrics were mutually incompatible: satisfying one metric would make it impossible to satisfy the other. Since then, the research area of algorithmic fairness has grown tremendously, along with other ethical AI areas like explainable AI, but one aspect has remained constant: the fact that there is no silver bullet.
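To make the incompatibility concrete, here is a minimal sketch in Python. It relies on an identity that follows directly from the confusion matrix: a group's false positive rate is fixed once its base rate, positive predictive value (PPV), and false negative rate (FNR) are fixed. The function name and all of the numbers are hypothetical, chosen only for illustration; they are not taken from the ProPublica analysis or from any real tool.

```python
def implied_false_positive_rate(base_rate, ppv, fnr):
    """False positive rate implied by a group's base rate, PPV, and FNR.

    Derivation from the confusion matrix:
      TP  = (1 - fnr) * positives
      FP  = TP * (1 - ppv) / ppv
      FPR = FP / negatives
    """
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)


# Hypothetical setting: the tool is equally "well calibrated" for both groups
# (same PPV) and misses the same share of true positives (same FNR).
ppv = 0.7
fnr = 0.3

# Hypothetical base rates that differ between the two groups.
fpr_group_a = implied_false_positive_rate(base_rate=0.5, ppv=ppv, fnr=fnr)
fpr_group_b = implied_false_positive_rate(base_rate=0.3, ppv=ppv, fnr=fnr)

print(f"Group A false positive rate: {fpr_group_a:.3f}")
print(f"Group B false positive rate: {fpr_group_b:.3f}")
```

Under these assumed numbers, the group with the higher base rate ends up with the higher false positive rate even though PPV and FNR are identical across groups. Equalizing the false positive rates would require giving up equality on one of the other two metrics, which is the tension the debate centered on.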

As both a lawyer and statistician by training, I believe strongly in bridging the technical and sociotechnical when it comes to operationalizing AI ethics. Ethical inquiries are highly contextual, and there are often tensions between different ethical objectives. For example, in order to audit algorithmic systems for bias and to mitigate any biases found, it is necessary to know which demographic groups the individuals represented in the data belong to. This can run counter to privacy and anti-discrimination law protections that make it difficult to collect and use demographic data. Threading the needle to implement effective algorithmic bias audits while upholding strong privacy protections is complex and requires addressing both legal and technical considerations.

In addition to being intrinsically interdisciplinary, AI ethics is highly nuanced and contextual. We will never have a simple checklist that captures all relevant AI ethics concerns or a set of diagnostic tools to certify that an AI system is completely ethical. Research is thus key to diagnosing AI ethics problems and formulating solutions. Unlike more established fields where practitioners simply need to apply the learnings from decades of R&D and real-world case studies, in AI ethics, a closer relationship is needed between researchers and practitioners. At Sony AI, we will focus on conducting cutting-edge research on challenging real-world problems facing Sony’s product teams, which span image sensing, cameras, games, music, pictures and finance, among other areas.

Sony AI is “a place for grand challenges” and envisioning a “bold future.” If you are interested in joining us to help define a future where AI is used to unleash human creativity while achieving fairness, transparency and accountability, please check out our job postings.

Alice Xiang

Senior Research Scientist

Alice Xiang is a Senior Research Scientist at Sony AI, where she leads research on AI ethics. Alice previously worked as the Head of Fairness, Transparency, and Accountability Research at the Partnership on AI, where she led a team of interdisciplinary researchers and a portfolio of multi-stakeholder research initiatives. She also served as a Visiting Scholar at Tsinghua University’s Yau Mathematical Sciences Center, where she taught a course on Algorithmic Fairness, Causal Inference, and the Law. Core areas of Alice’s research include bridging technical and legal approaches to algorithmic bias, assessing explainability techniques in deployment, and examining risk assessment tools. Alice’s work sits at the intersection of social justice and AI; she seeks to ensure that algorithms can be used to enhance human creativity without entrenching societal inequities.

She was recognized as one of the 100 Brilliant Women in AI Ethics, and has been quoted in the Wall Street Journal, MIT Tech Review, Fortune, and VentureBeat, among others, for her work on algorithmic bias and transparency, criminal justice risk assessment tools, and AI ethics. She has given guest lectures at Waterloo, Harvard, SNU Law School, the Simons Institute at Berkeley, among other universities. Her research has been published in top machine learning conferences, journals, and law reviews.

Alice is both a lawyer and statistician, with experience developing machine learning models and serving as legal counsel for technology companies. Alice holds a Juris Doctor from Yale Law School, a Master’s in Development Economics from Oxford, a Master’s in Statistics from Harvard, and a Bachelor’s in Economics from Harvard.

Latest Blog

June 17, 2025 | Events, Sony AI

SXSW Rewind: From GT Sophy to Social Robots—Highlights from Peter Stone and Cynt…

While SXSW 2025 may now be in the rearview mirror, the conversations it ignited continue to resonate. On March 10, 2025, Peter Stone, Chief Scientist at Sony AI and Professor at Th…

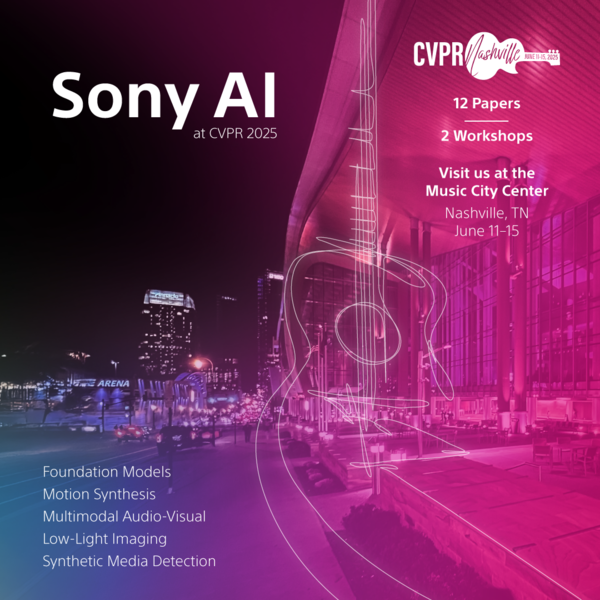

June 12, 2025 | Events, Sony AI

Research That Scales, Adapts, and Creates: Spotlighting Sony AI at CVPR 2025

At this year’s IEEE / CVF Computer Vision and Pattern Recognition Conference (CVPR) in Nashville, TN Sony AI is proud to present 12 accepted papers spanning the main conference and…

June 3, 2025 | Sony AI

Advancing AI: Highlights from May

From research milestones to conference prep, May was a steady month of progress across Sony AI. Our team's advanced work in vision-based reinforcement learning, continued building …